What Is Web Scraping: The Ultimate Beginner’s Guide

Learn the basics of web scraping with this comprehensive overview.

Web scraping is a very powerful method for collecting data on a large scale and extracting valuable insights from it, whether for research, automation or business intelligence. This guide will give you a comprehensive overview of what web scraping is, how it works, and what you can do with it. Let’s get started!

What Is Web Scraping – the Definition

Web scraping refers to the process of collecting data from the web. It’s usually performed using automated tools that are built by hand, written with the help of large language models, or bought from commercial providers.

Web scraping goes by various names. It can also be called web harvesting, web data extraction, screen scraping, or data mining. There are some subtle differences between these terms, but they’re often used interchangeably.

Why Scrape Data from the Web?

The main reason for web scraping is to quickly gather vast amounts of data that can be used to inform business decisions and power services.

First off, the automated nature of web scraping makes it faster than manual data retrieval. Let’s say you want to collect reviews from multiple websites, like Amazon and Google, to learn about a product. With web scraping, it takes minutes; manually, you’d spend hours or even days. Once you have the data at hand, you can put it to work for your business.

Some companies use it to research the market by scraping the product and pricing information of competitors. Others aggregate data from multiple sources – for example, airlines – to present great deals. Still others scrape various public sources, like Yellow Pages and Crunchbase, to find business leads.

However, one of the largest new use cases for web scraping is data for AI. An LLM takes a great deal of data to train, and the more data it has, the better its performance is likely to become. Web scraping delivers AI training data at scale. But it doesn’t end here.

It quickly became clear that an AI that relies only on its training data can be severely limited, especially when it comes to having up-to-date information. Continuous scraping therefore powers retrieval-augmented generation (RAG), a method of supplying AI models with fresh information.

How Web Scraping Works

Web scraping involves multiple steps done in succession: finding the target URL, downloading the HTML, and extracting the necessary data from the HTML. Outside of the first step, the process should be automated.

Here’s how it looks in detail:

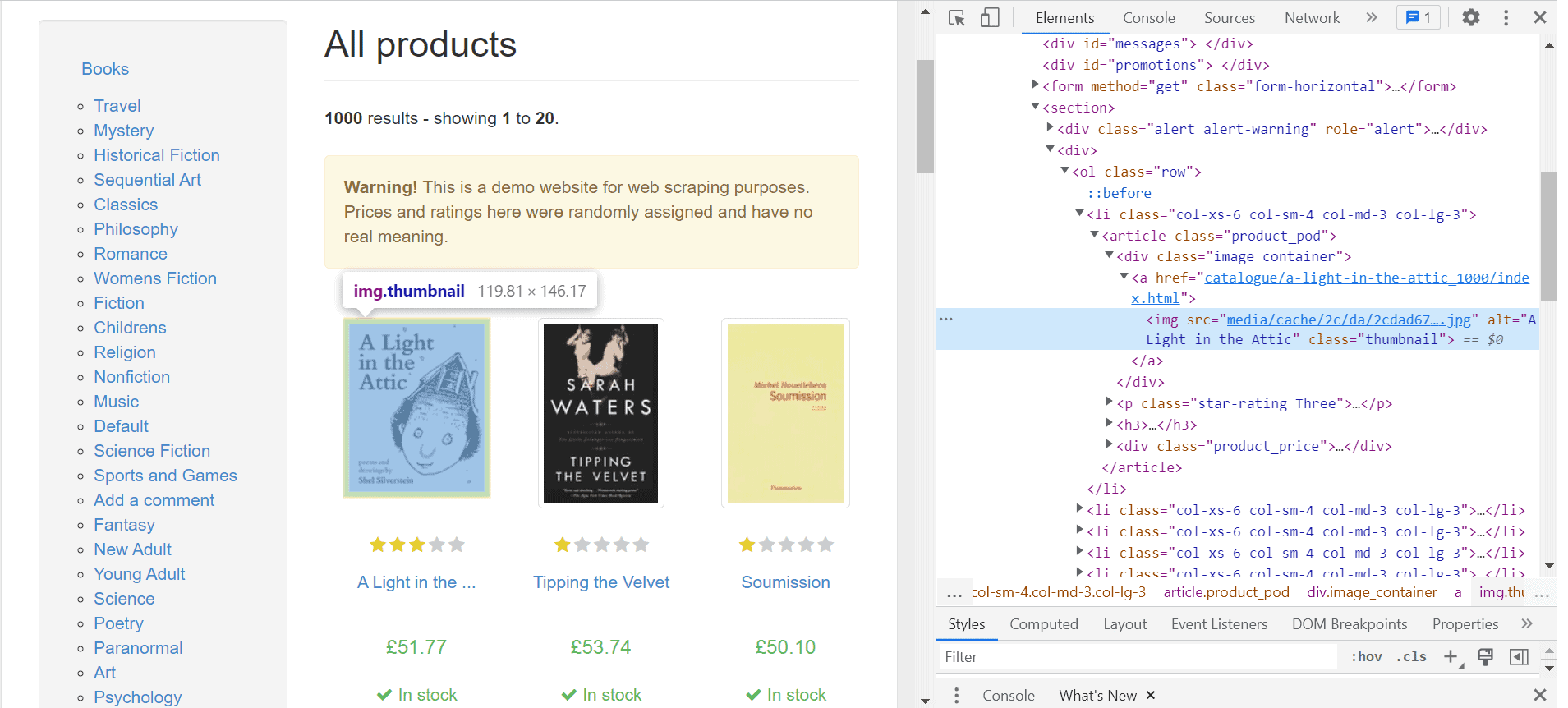

- Identify your target web pages. For example, you may want to scrape all products in a category of an e-commerce store. You can do it by hand or build something called a web crawler to find relevant URLs.

- Download their HTML code. Every web page is delivered as HTML, which the browser then renders; you can see how it looks by right-clicking the page in your web browser and selecting Inspect.

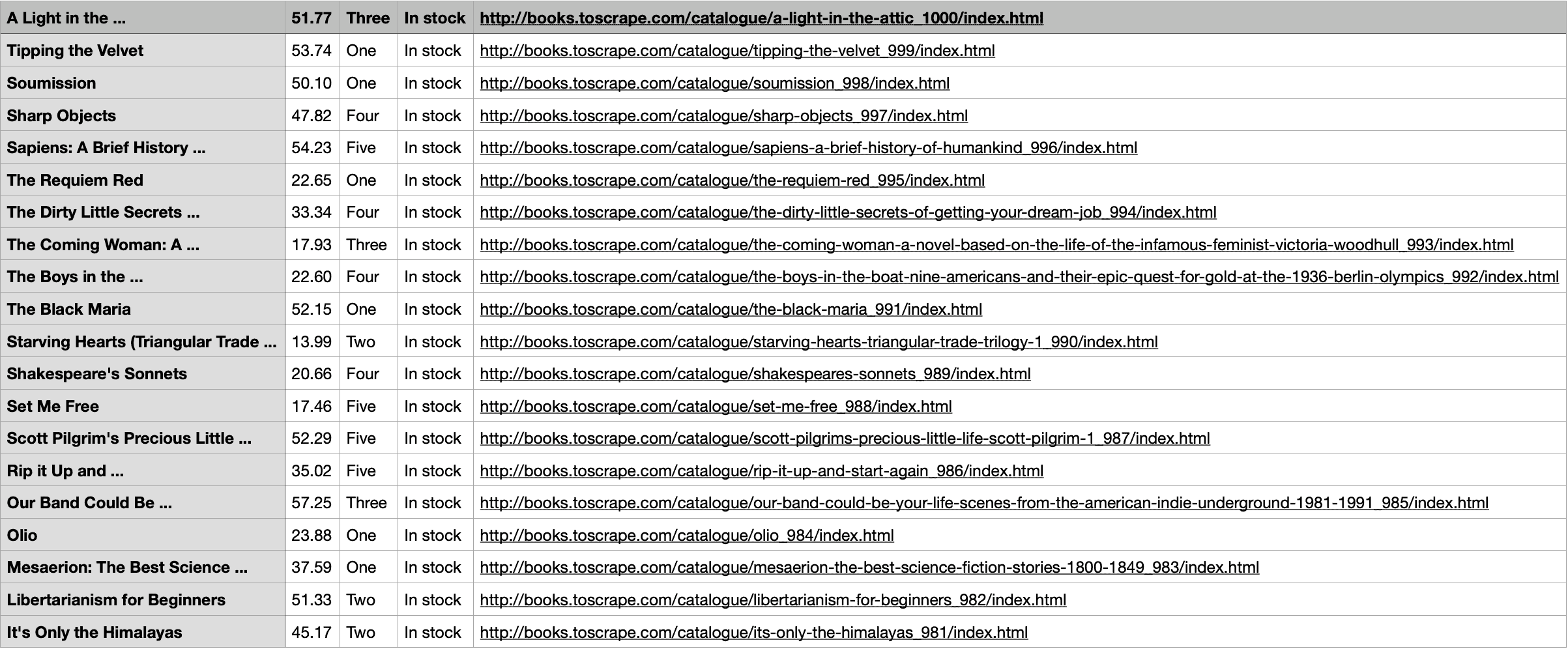

- Extract the data points you want. HTML is messy and has unnecessary information (like code describing the visual aspects of the page), so you’ll need to clean it up. This process is called data parsing. The desired result is structured data in a .json or .csv file, or another readable format.
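The download and extraction steps above can be sketched in a few lines of Python using only the standard library. This is a minimal illustration, not a production scraper: the markup (a `div` with class `product` containing `name` and `price` spans), the `example-scraper/0.1` user agent, and all function names are assumptions made up for the example.

```python
import csv
import io
import json
from html.parser import HTMLParser
from urllib.request import Request, urlopen


def download_html(url):
    """Step 2: download the raw HTML of a target page."""
    request = Request(url, headers={"User-Agent": "example-scraper/0.1"})
    with urlopen(request, timeout=10) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


class ProductParser(HTMLParser):
    """Step 3: pull name and price out of the (assumed) product markup."""

    def __init__(self):
        super().__init__()
        self.products = []
        self._field = None  # name of the field currently being read, if any

    def handle_starttag(self, tag, attrs):
        css_class = dict(attrs).get("class", "")
        if tag == "div" and css_class == "product":
            self.products.append({"name": "", "price": ""})
        elif tag == "span" and css_class in ("name", "price") and self.products:
            self._field = css_class

    def handle_endtag(self, tag):
        if tag == "span":
            self._field = None

    def handle_data(self, data):
        if self._field and self.products:
            self.products[-1][self._field] += data.strip()


def parse_products(html):
    """Turn messy HTML into a list of clean dictionaries."""
    parser = ProductParser()
    parser.feed(html)
    parser.close()
    return parser.products


def to_csv(rows):
    """Serialize the parsed rows as CSV text."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["name", "price"])
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Stand-in for a downloaded page, so the example runs without network access.
SAMPLE_HTML = """
<div class="product"><span class="name">Kettle</span><span class="price">24.99</span></div>
<div class="product"><span class="name">Toaster</span><span class="price">31.50</span></div>
"""

if __name__ == "__main__":
    rows = parse_products(SAMPLE_HTML)
    print(json.dumps(rows, indent=2))  # structured JSON
    print(to_csv(rows))                # the same data as CSV
```

In a real project you would pass the result of `download_html()` to `parse_products()`; many scrapers also swap the hand-written parser for a dedicated parsing library.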

That is but the basic outline, and it gets more intricate. For example, if you’re scraping many pages from a single website, you’ll also need to set up proxies and obtain tools to bypass CAPTCHA challenges and other potential hurdles.

There are many tools to facilitate the data scraping process or offload some of the work from you. Ready-made web scrapers let you avoid working with the HTML and writing your own code; proxies can help you circumvent blocks; today, both of them are often combined into a single product. Data parsing is also often included in the package – sometimes it’s even AI-powered.

In fact, AI web scrapers exist as well, able to craft scraping logic based on your natural language instructions. Such packaged commercial tools are lowering the technical skill barrier for entry into scraping all the time.

At the same time, starting out by writing your own script is getting harder, as scraping countermeasures keep growing more intricate.

Is Web Scraping Legal?

Web scraping is not always a welcome – or sometimes even ethical – affair. Scrapers often ignore the website’s guidelines (ToS and robots.txt), bring down its servers with too many requests, or even appropriate the data they scrape to launch a competing service. It’s no wonder many websites are keen on blocking any crawler or scraper in sight (except for, of course, search engines).

Still, web scraping as such is legal, with some limitations. Over the years, there have been a number of landmark cases. We’re no lawyers, but it has been established that scraping a website is okay as long as the information is publicly available and collecting it doesn’t violate copyright, privacy, or access restrictions.

Since the question of web scraping isn’t always straightforward – each use case is considered individually – it’s wise to seek legal advice.

Web Scraping vs API

Web scraping is not the only method for getting data from websites. In fact, it’s not even the default one. The preferred approach is to use an API.

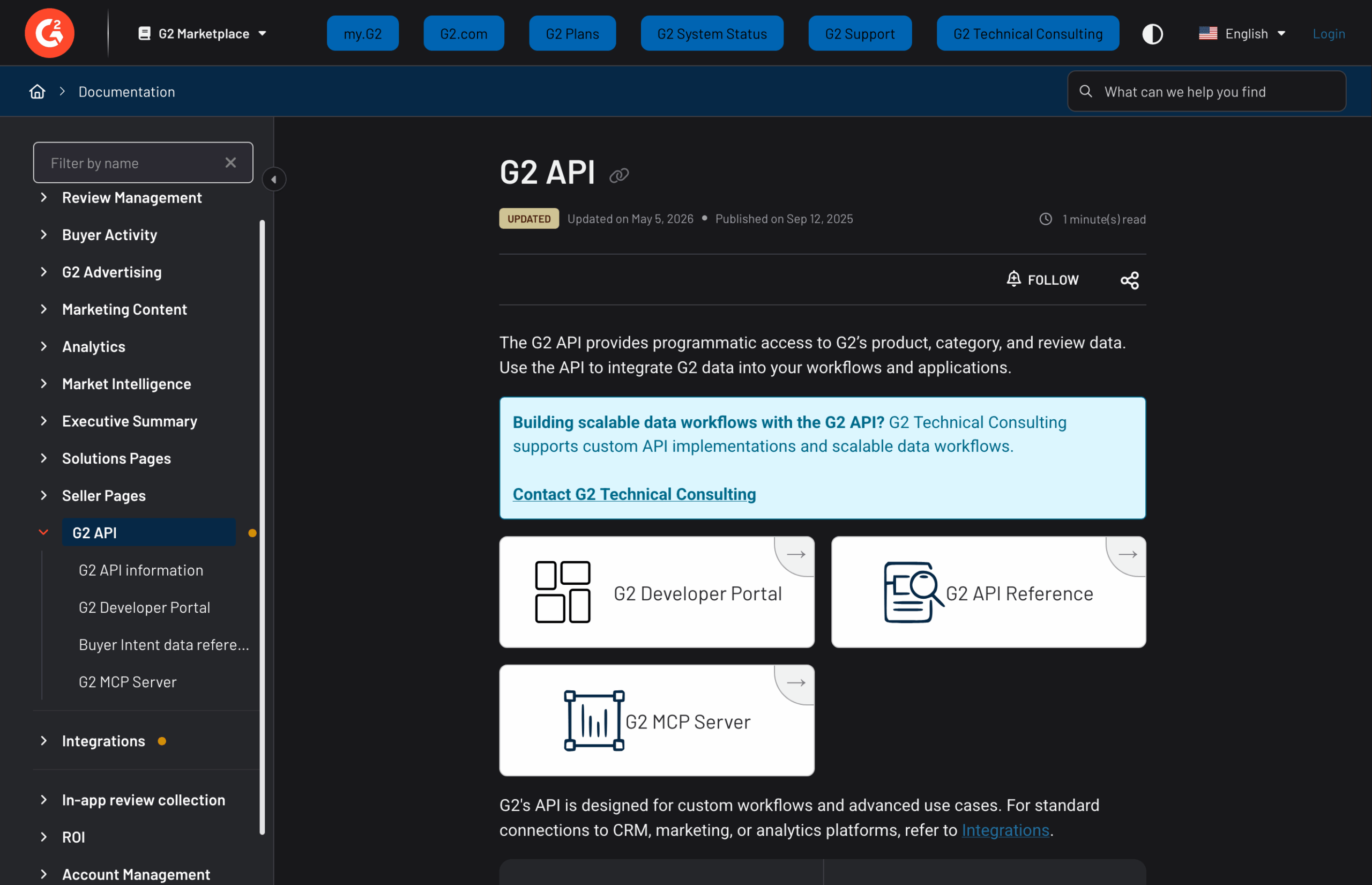

An API, or application programming interface, provides a method to interact with a certain website or app programmatically. Websites like G2 have official APIs for downloading their data.

Sometimes, a website may have an unofficial API, made by someone after discovering that the target site makes API calls. By intercepting and mimicking those network requests, you can replicate API-like functionality – it’s harder to accomplish than using an official one, but it returns structured data of the type you want.
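As a rough sketch of mimicking such a request, the Python snippet below rebuilds a call that a site's own front end might make and picks fields out of the JSON it returns. Everything specific here is hypothetical: the endpoint URL, the `product` and `page` parameters, and the shape of the response are placeholders for whatever you actually observe in your browser's network traffic.

```python
import json
from urllib.parse import urlencode
from urllib.request import Request, urlopen

# Hypothetical endpoint, standing in for one observed in the browser's network traffic.
API_URL = "https://example.com/api/v1/reviews"


def build_api_request(product_id, page=1):
    """Recreate the request the site's own front end would send."""
    query = urlencode({"product": product_id, "page": page})
    headers = {
        "Accept": "application/json",
        "User-Agent": "example-scraper/0.1",
    }
    return Request(f"{API_URL}?{query}", headers=headers)


def extract_reviews(payload):
    """Keep only the fields we care about from the JSON response body."""
    data = json.loads(payload)
    return [
        {"author": item["author"], "rating": item["rating"]}
        for item in data.get("reviews", [])
    ]


def fetch_reviews(product_id, page=1):
    """Send the request and return the parsed reviews (needs network access)."""
    with urlopen(build_api_request(product_id, page), timeout=10) as response:
        return extract_reviews(response.read().decode("utf-8"))
```

Because the response is already structured, there is no HTML parsing step; the trade-off is that an undocumented endpoint can change without notice.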

However, APIs have disadvantages:

- Not all websites offer APIs; in some cases (like with Reddit), a website might restrict access to its API or start charging for it.

- The data provided by the API may not be the data you’re looking for: the original site owners are not obligated to cover all of the angles, after all.

- You often have to deal with limits on what data you can collect and how often.

- APIs tend to change or break more often than even web scraping scripts.

So, the main difference between web scraping and an API is that the former gives better access to data: whatever you can see in your browser, you can get. However, web scraping is often carried out without websites knowing about it. And when they do find out, they’re not very happy about it.

Choosing the Best Web Scraping Tool for the Job

There’s no shortage of web scraping tools on the market. If you want, you can even scrape with Microsoft Excel. Should you, though? Probably not. Web scraping tools can be divided into four categories: 1) custom-built, 2) ready-made, 3) web scraping APIs, and 4) AI web scrapers.

The most basic way is to build a scraper yourself. There are relevant libraries and frameworks in various programming languages, but Python and Node.js are the most popular choices. Here’s why:

- Python is very easy to read, and you don’t need to compile code. It has many high-performing web scraping libraries and other tools tailored to any web scraping project you can think of. Python is used by both beginners and advanced users and has strong community support.

- Node.js is based on JavaScript. It’s asynchronous by default, so it can handle concurrent requests. That means it works best in situations when you need to scrape multiple pages. Node.js is simple to deploy and has high-performing tools for dynamic scraping.

If you’re already familiar with, let’s say, PHP, you can use the skills for web scraping as well.

However, writing your own scraping script requires coding knowledge and development time. And it’s not a one-time investment: websites change all the time, breaking scraping logic.

If you lack programming skills or time, you can go with ready-made web scraping tools. No-code web scrapers have everything configured for you and are wrapped in a nice user interface. They let you scrape with minimal or no programming knowledge, and the developer is the one tasked with fixing the code if it breaks. You can also try pre-collected datasets – collections of records that are organized (often arranged in a table) and prepared for further analysis.

The middle ground between the first two categories is web scraping APIs. In essence, these APIs handle proxies and the web scraping logic, so that you can extract data by making a simple API call to the provider’s infrastructure.

For those looking for additional support, the growing popularity of ChatGPT has made it a helpful tool in web scraping. While not perfect, it can write simple code and explain the logic behind it. It’s great for beginners learning the ropes or experienced scrapers looking to refine their skills.

Some providers have taken that approach a step further by integrating LLMs into their data scraping infrastructure as AI web scrapers. This allows using natural language when submitting scraping requests, with the process then running in the background to spit out parsed data that’s ready for use.

Web Scraping Challenges

Web scraping isn’t easy; some websites do their best to ensure you can’t catch a break. Here are some of the obstacles you might encounter.

Modern websites use request throttling to keep their servers from being overloaded and to avoid unnecessary connection interruptions. The website controls how often you can send requests within a specific time window. When you reach the limit, your web scraper won’t be able to perform any further actions. If you ignore the limit, the website might block your IP address.
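One common way to cope with throttling is to slow down when the server says so. The sketch below assumes the site signals its limit with the standard HTTP 429 (Too Many Requests) status and, optionally, a numeric `Retry-After` header; not every website does. The function names and default values are illustrative.

```python
import time
from urllib.error import HTTPError
from urllib.request import urlopen


def wait_seconds(retry_after, attempt, base=1.0, cap=60.0):
    """Honor a numeric Retry-After header, otherwise back off exponentially."""
    if retry_after is not None and retry_after.strip().isdigit():
        return min(float(retry_after), cap)
    # No usable header (or a date-style value): wait 1s, 2s, 4s, ... up to the cap.
    return min(base * (2 ** attempt), cap)


def fetch_with_backoff(url, max_attempts=5):
    """Retry a request that was rejected for exceeding the rate limit."""
    for attempt in range(max_attempts):
        try:
            with urlopen(url, timeout=10) as response:
                return response.read()
        except HTTPError as error:
            if error.code != 429:
                raise  # a different problem; don't hide it
            time.sleep(wait_seconds(error.headers.get("Retry-After"), attempt))
    raise RuntimeError(f"still rate limited after {max_attempts} attempts")
```

Capping the wait keeps a single misbehaving page from stalling the whole run, while the growing delay gives the rate-limit window time to reset.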

Another challenge that can greatly hinder your web scraping efforts is CAPTCHAs, a technique used to fight bots. They can be triggered because you’re 1) making too many requests in a short time, 2) using low-quality proxies, or 3) not covering your web scraper’s fingerprint properly. Some CAPTCHAs are hard-coded into the HTML markup and appear at certain points, like registration. Until you pass the test, your scraper is out of work.

The harshest way a website can punish you for scraping is by blocking your IP address. However, there’s a problem with IP bans – the website’s owner can ban a whole range of IPs (256), so all the people who share the same subnet will lose access. This has made websites reluctant to use this method on IPs that are flagged as residential. After all, that’s the type of IP potential customers use. Consequently, this gave rise to residential and mobile proxy suppliers.
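To see where the figure of 256 comes from, here is a small Python illustration. It assumes the ban targets a /24 subnet, the block size that contains exactly 256 addresses; the range used is reserved for documentation and serves purely as a placeholder.

```python
import ipaddress

# 203.0.113.0/24 is a documentation-only range, used here as a placeholder.
banned_range = ipaddress.ip_network("203.0.113.0/24")


def is_banned(ip):
    """Return True if the address falls inside the banned subnet."""
    return ipaddress.ip_address(ip) in banned_range


print(banned_range.num_addresses)  # 256
print(is_banned("203.0.113.77"))   # True: a neighbor on the same subnet is caught too
print(is_banned("203.0.114.1"))    # False: outside the banned range
```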

However, the fight doesn’t end there: anti-bot technology is advancing apace with the developments in the field of web scraping. For example, companies like Cloudflare are introducing pattern-seeking systems that block requests matching the behavior of bots and scrapers.

Web Scraping Best Practices

Here are some web scraping best practices to help your project succeed.

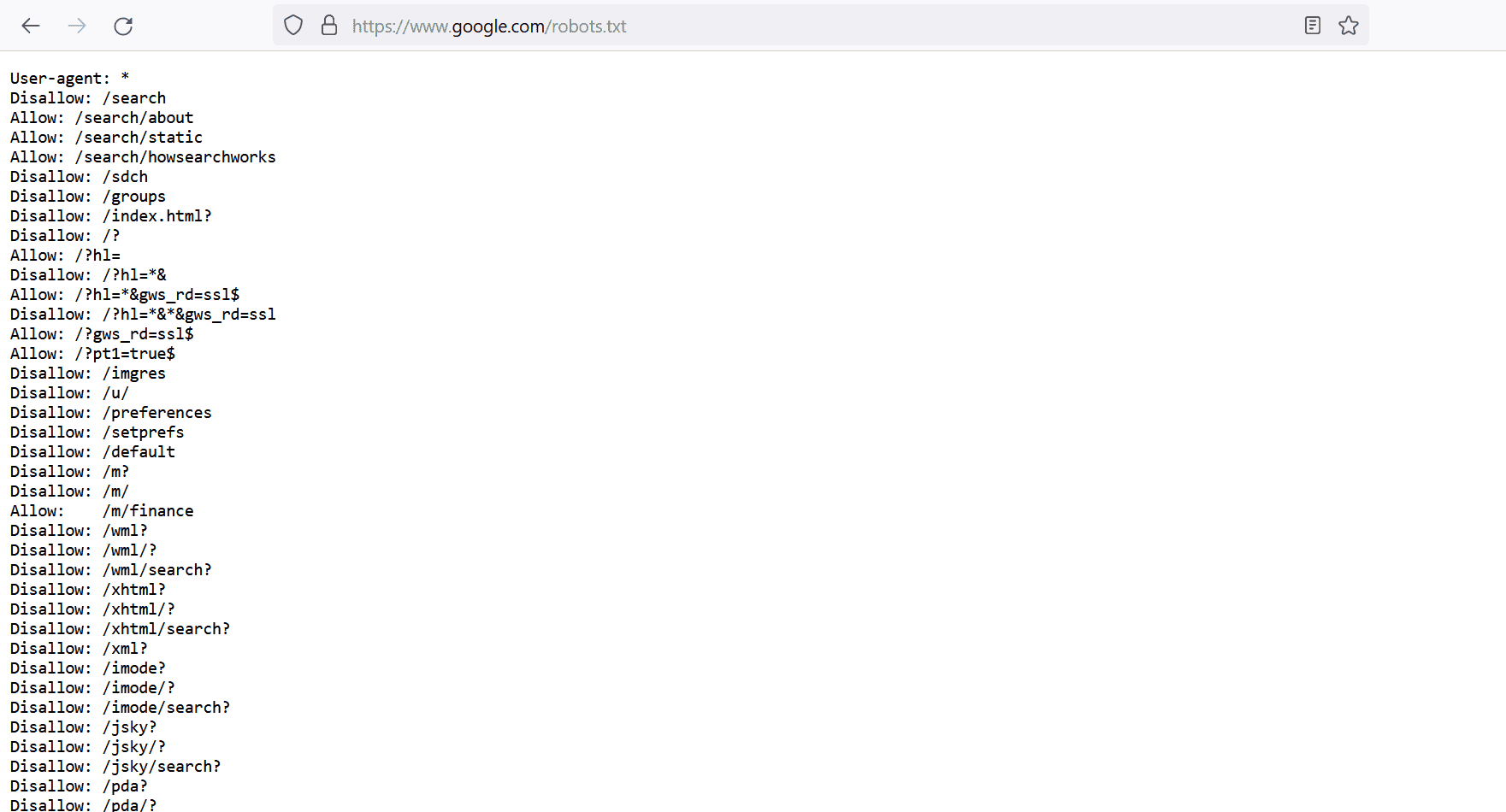

First and foremost, respect the website you’re scraping. You should understand data privacy regulations and respect the website’s terms of service. Also, most websites have a robots.txt file – it gives instructions on which content a crawler can access and what it should avoid.
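Checking robots.txt can be automated. The sketch below uses `urllib.robotparser` from Python's standard library; the rules in `SAMPLE_ROBOTS_TXT`, the `example-scraper` user agent, and the example.com URLs are invented for the example so that it runs without network access.

```python
from urllib.robotparser import RobotFileParser

# Made-up rules standing in for a real site's robots.txt.
SAMPLE_ROBOTS_TXT = """\
User-agent: *
Disallow: /checkout/
Allow: /products/
Crawl-delay: 5
"""


def load_rules(robots_txt):
    """Parse robots.txt text into an object that can answer access questions."""
    rules = RobotFileParser()
    rules.parse(robots_txt.splitlines())
    return rules


rules = load_rules(SAMPLE_ROBOTS_TXT)
agent = "example-scraper"

print(rules.can_fetch(agent, "https://example.com/products/kettle"))  # True
print(rules.can_fetch(agent, "https://example.com/checkout/cart"))    # False
print(rules.crawl_delay(agent))                                       # 5
```

Against a live site, you could instead call `set_url()` with the address of its robots.txt followed by `read()` to download and parse the file.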

Websites can track your actions. If you send too many requests, your activity will be red-flagged. So, you should act naturally by keeping random intervals between connection requests and reducing the crawling rate. And if you don’t want to burden both the website and your web scraper, don’t collect data during peak hours.
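Random intervals are simple to add. In this sketch the 2 to 6 second range is an arbitrary assumption you should tune per site, and `fetch` is whatever download function you already use (hypothetical here).

```python
import random
import time


def polite_delay(min_seconds=2.0, max_seconds=6.0, rng=random):
    """Pick a random pause so requests don't arrive at a fixed rhythm."""
    return rng.uniform(min_seconds, max_seconds)


def crawl(urls, fetch, sleep=time.sleep):
    """Fetch each URL in turn, pausing a random interval between requests."""
    results = []
    for index, url in enumerate(urls):
        if index > 0:  # no need to wait before the very first request
            sleep(polite_delay())
        results.append(fetch(url))
    return results
```

Passing `sleep` in as a parameter is a small design choice that lets you test the crawl loop without actually waiting.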

Another critical step is to take care of your digital identity. Websites use anti-scraping technologies like CAPTCHAs, IP blocks, and request throttling. To avoid these and other obstacles, rotate your proxies and the user-agent. The former hides your location, and the latter spoofs your browser. So, every time you connect, you’ll have a “new” identity.
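A minimal sketch of rotation with Python's standard library might look like this. The proxy addresses are placeholders from a documentation-only IP range and the user-agent strings are merely illustrative; in practice you would substitute the proxies from your provider and a maintained list of current browser user agents.

```python
import itertools
import random
from urllib.request import ProxyHandler, Request, build_opener

# Placeholder values: replace with real proxies and up-to-date user agents.
PROXIES = [
    "http://203.0.113.10:8080",
    "http://203.0.113.11:8080",
]
USER_AGENTS = [
    "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
    "(KHTML, like Gecko) Chrome/124.0.0.0 Safari/537.36",
    "Mozilla/5.0 (X11; Linux x86_64; rv:125.0) Gecko/20100101 Firefox/125.0",
]

proxy_pool = itertools.cycle(PROXIES)  # round-robin over the proxy list


def next_identity():
    """Return the next proxy in the rotation plus a randomly chosen user agent."""
    return next(proxy_pool), random.choice(USER_AGENTS)


def fetch(url):
    """Download a page through a fresh proxy and user-agent combination."""
    proxy, user_agent = next_identity()
    opener = build_opener(ProxyHandler({"http": proxy, "https": proxy}))
    request = Request(url, headers={"User-Agent": user_agent})
    with opener.open(request, timeout=10) as response:
        return response.read()
```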

Frequently Asked Questions About Web Scraping

Web scraping involves automated data gathering online, usually targeting many pages on a single site. It commonly involves downloading the HTML code of the page and parsing it for relevant data points.

Websites block web scrapers because they a) generate traffic that can overload a website, and b) aren’t actual paying customers that the owner of the site would profit from.

ChatGPT is capable of limited web scraping; however, it is better at generating a script you can run to scrape a website.

The definition of “the best scraper” depends on what parameters are important for your use case. To help users like you make that decision, we benchmarked some of the most prominent scrapers on the market to create this list of the top web scraping tools.