What Is Data Parsing and What’s It For?

Learn about data parsing and its role in web scraping.

Data parsing is an essential part of web scraping: it turns the pages you scrape into workable data sets. This guide explains what data parsing is and how it works.

Let’s get started.

What Is Data Parsing?

Broadly speaking, data parsing means analyzing a string of data and then structuring it according to certain criteria. For example, you can take a sentence and parse it by parts of speech: nouns, verbs, adjectives, and so on.

In programming, the first part is called lexical analysis, where you turn a string into tokens. The second part is called syntactic analysis, where you use those tokens to create a parse tree that shows their relations to one another.
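To make the two stages concrete, here is a minimal sketch for tiny arithmetic expressions. The grammar (whole numbers, `+` and `*`) and the function names `tokenize` and `parse` are assumptions chosen for illustration, not part of any particular library.

```python
import re


def tokenize(text):
    """Lexical analysis: turn a string into (kind, value) tokens."""
    tokens = []
    for piece in re.findall(r"\d+|\S", text):
        if piece.isdigit():
            tokens.append(("NUM", int(piece)))
        elif piece in "+*":
            tokens.append(("OP", piece))
        else:
            raise ValueError(f"unexpected character: {piece!r}")
    return tokens


def parse(tokens):
    """Syntactic analysis: build a parse tree where * binds tighter than +."""
    pos = 0

    def peek():
        return tokens[pos] if pos < len(tokens) else (None, None)

    def number():
        nonlocal pos
        kind, value = peek()
        if kind != "NUM":
            raise SyntaxError("expected a number")
        pos += 1
        return value

    def term():
        nonlocal pos
        node = number()
        while peek() == ("OP", "*"):
            pos += 1
            node = ("*", node, number())
        return node

    def expr():
        nonlocal pos
        node = term()
        while peek() == ("OP", "+"):
            pos += 1
            node = ("+", node, term())
        return node

    tree = expr()
    if pos != len(tokens):
        raise SyntaxError("unexpected trailing tokens")
    return tree


tokens = tokenize("2 + 3 * 4")
tree = parse(tokens)
print(tokens)
print(tree)
```

The tree is a nested tuple, so the multiplication ends up as a child of the addition. That nesting is the "relations to one another" that a parse tree captures.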

In web scraping, data parsing means three things:

- Identifying the data you need from an HTML file.

- Cleaning duplicate or broken information.

- Structuring that data in a way that’s easy to understand and work with. This often involves converting raw HTML data into .json, .csv, or a similar format.
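The three steps above can be sketched with nothing but Python's standard library. The sample markup, the class names (`product`, `name`, `price`), and the helper names are invented for this example; a real page will need its own rules.

```python
import json
from html.parser import HTMLParser

# Invented sample page: one duplicate row and one row with a missing price.
SAMPLE_HTML = """
<ul>
  <li class="product"><span class="name">Mouse</span><span class="price">$10</span></li>
  <li class="product"><span class="name">Mouse</span><span class="price">$10</span></li>
  <li class="product"><span class="name">Keyboard</span><span class="price"></span></li>
  <li class="product"><span class="name">Monitor</span><span class="price">$150</span></li>
</ul>
"""


class ProductParser(HTMLParser):
    """Step 1: identify the name and price inside each product item."""

    def __init__(self):
        super().__init__()
        self.rows = []
        self._field = None  # which field the current text belongs to

    def handle_starttag(self, tag, attrs):
        css_class = dict(attrs).get("class")
        if tag == "li" and css_class == "product":
            self.rows.append({"name": "", "price": ""})
        elif tag == "span" and css_class in ("name", "price") and self.rows:
            self._field = css_class

    def handle_endtag(self, tag):
        if tag == "span":
            self._field = None

    def handle_data(self, data):
        if self._field:
            self.rows[-1][self._field] += data.strip()


def clean(rows):
    """Step 2: drop broken rows (missing fields) and exact duplicates."""
    seen, result = set(), []
    for row in rows:
        key = (row["name"], row["price"])
        if all(key) and key not in seen:
            seen.add(key)
            result.append(row)
    return result


parser = ProductParser()
parser.feed(SAMPLE_HTML)
products = clean(parser.rows)

# Step 3: structure the result as JSON.
print(json.dumps(products, indent=2))
```

Swapping `json.dumps` for the `csv` module would produce a .csv file instead. The identifying and cleaning steps stay the same.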

Why Is Parsing of Data Needed in Web Scraping?

Let’s say you have a website to scrape. The easiest way to do this would be to use an HTTP client library like Python’s Requests. You could then send a GET request and save the HTML source somewhere on your computer. Simple.

Is it, though? If you only need pricing data from a product page, how much value will you get from downloading the whole page? In this case, it might be easier to copy-paste the info by hand.

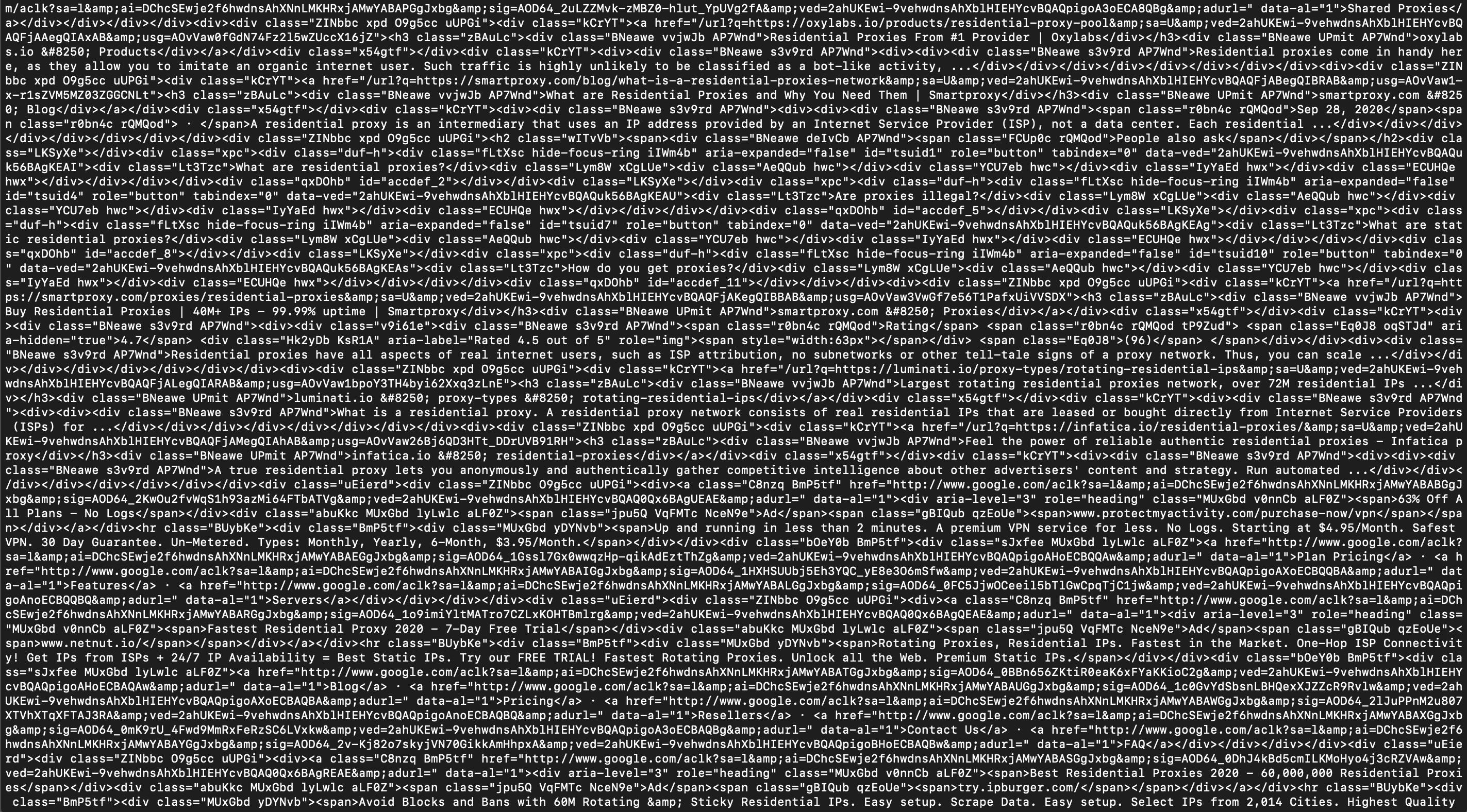

But let’s say that doesn’t bother you. Are you good now? Not exactly. The second problem is that relatively few HTML pages come neatly formatted when you download them. For example, here’s a Google Search page for the query “residential proxies” I downloaded without parsing. Do you find it easy to read?

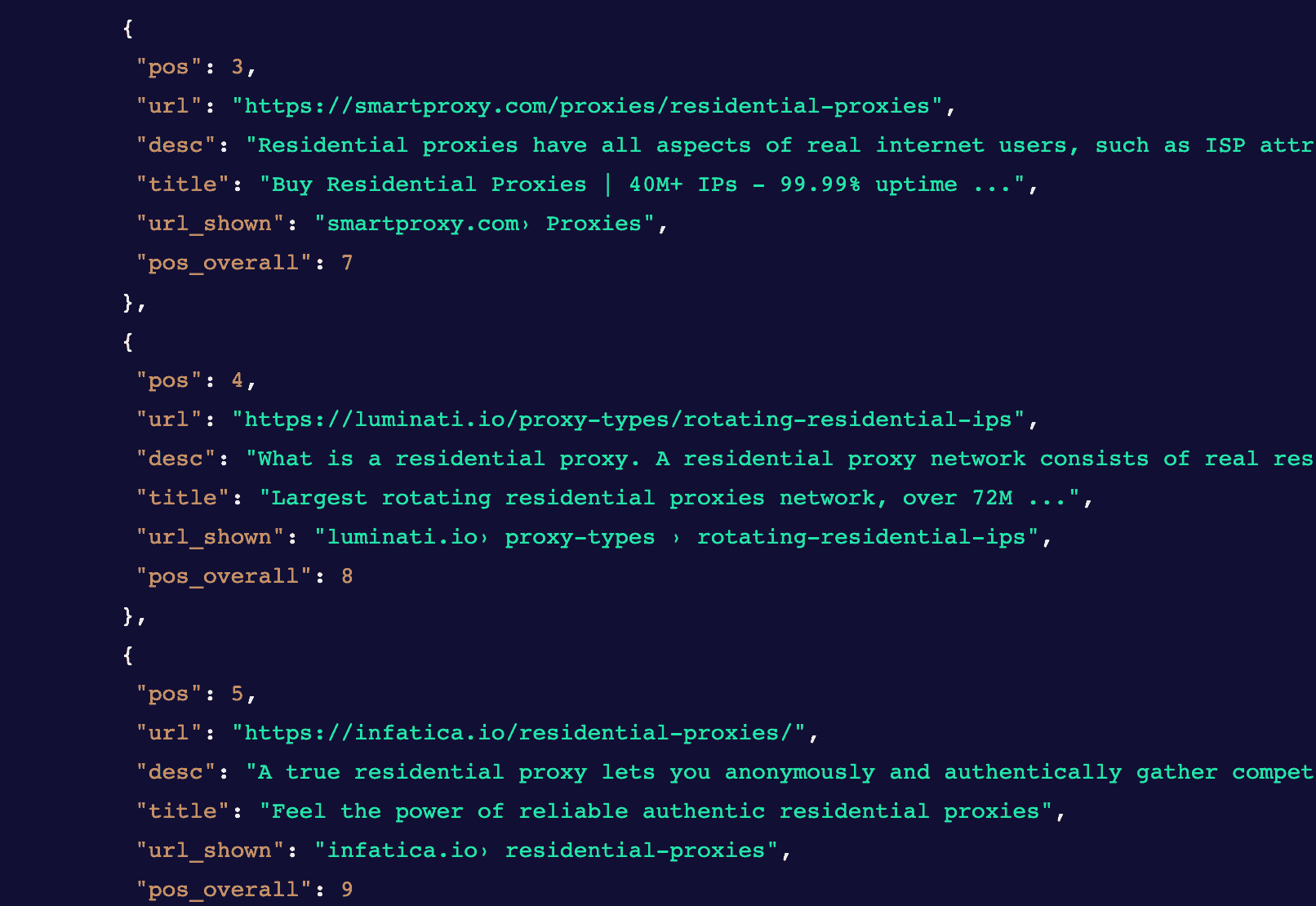

Thought so. What about now?

As you can see, the parsed page is much easier to understand. Not only does it leave out the irrelevant data (e.g., layout and styling tags), but it also neatly structures the information you do need; in this case, by converting it into .json.

Where to Get a Data Parser?

Find the best HTML parsers for Python.

If you’re looking to buy web scraping software, like a SERP API or visual scraper, it’s likely to have data parsing already built in. In this case, you don’t need an extra tool, but it really depends on the individual scraper. We have a list of the best SERP APIs with data parsers included.

If you would rather not build the parser by hand, the newest option on the block is an AI parser. An AI data parser will either use an LLM to write a parsing algorithm for you, or it will task the LLM itself with parsing the page.

How to Parse Data

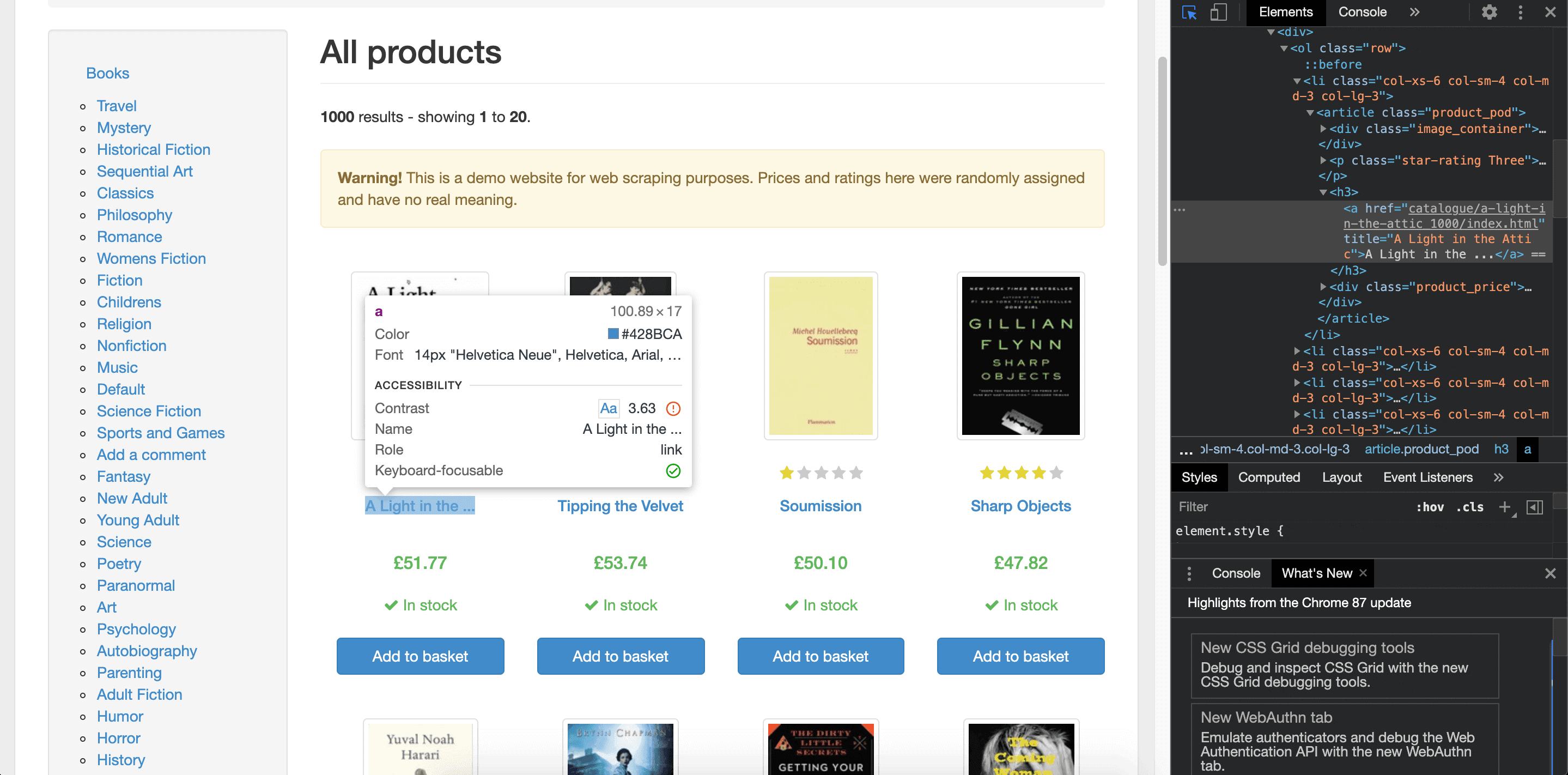

To parse a simple webpage, you’ll need to find the elements you want to scrape. For this, you should use the Inspect Element tool.

You can do this by opening the page in your browser, right-clicking the element you’re interested in, and selecting “Inspect”. This will open the browser’s developer tools and show the page’s DOM tree. For example, in the screenshot, each book’s title sits in an <a> tag nested inside an H3 element.

Once you’ve identified this information, you’ll have to tell your parser where to find it. The two main ways to do this are CSS selectors and XPath. Further steps depend on your parsing tool.
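As a rough illustration of the XPath approach, the sketch below uses Python's built-in `xml.etree.ElementTree`. Two caveats: it only supports a limited subset of XPath, and it needs well-formed markup, so real-world HTML usually calls for a dedicated HTML parser instead. The snippet and book titles are invented sample data that mimic an `<a>` tag nested inside an H3 element.

```python
import xml.etree.ElementTree as ET

# Invented, well-formed sample markup (not taken from a real page).
SNIPPET = """
<section>
  <article class="product_pod">
    <h3><a href="book-1.html" title="Sample Book One">Sample Book ...</a></h3>
  </article>
  <article class="product_pod">
    <h3><a href="book-2.html" title="Sample Book Two">Sample Book Two</a></h3>
  </article>
  <article class="sidebar">
    <h3><a href="promo.html" title="Promo">Promo</a></h3>
  </article>
</section>
""".strip()

root = ET.fromstring(SNIPPET)

# XPath: every <a> that is a direct child of an <h3>, anywhere in the tree.
# The equivalent CSS selector would be "h3 > a".
all_titles = [a.get("title") for a in root.findall(".//h3/a")]

# XPath with an attribute predicate: only links inside product articles.
# The equivalent CSS selector would be "article.product_pod > h3 > a".
book_titles = [
    a.get("title")
    for a in root.findall(".//article[@class='product_pod']/h3/a")
]

print(all_titles)
print(book_titles)
```

Reading the `title` attribute rather than the link text is a deliberate choice here: in this sample, the visible text of the first link is truncated, while the attribute holds the full title.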

In any case, you’ll first have to download the HTML source using an HTTP client, then extract the elements you’ve selected with a parser, and finally store the output in a format of your choice. If you’re working with multiple pages, you’ll also have to take care of the crawling logic to navigate through them.
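The download, extract, and store steps, plus a bare-bones crawl loop, might look like the sketch below. It uses only the standard library, with `urllib.request` standing in for an HTTP client such as Requests. All function names and the sample pages are assumptions made for this example, and the demo at the end swaps the network for a dictionary so it runs offline.

```python
import csv
import io
from html.parser import HTMLParser
from urllib.request import urlopen


def download(url):
    """Step 1: fetch the HTML source (standard-library stand-in for an HTTP client)."""
    with urlopen(url, timeout=10) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")


class TitleParser(HTMLParser):
    """Step 2: collect the title attribute of every <a> nested inside an <h3>."""

    def __init__(self):
        super().__init__()
        self.titles = []
        self._in_h3 = False

    def handle_starttag(self, tag, attrs):
        if tag == "h3":
            self._in_h3 = True
        elif tag == "a" and self._in_h3:
            title = dict(attrs).get("title")
            if title:
                self.titles.append(title)

    def handle_endtag(self, tag):
        if tag == "h3":
            self._in_h3 = False


def extract_titles(html):
    parser = TitleParser()
    parser.feed(html)
    return parser.titles


def store_csv(titles, out):
    """Step 3: write the extracted data to any file-like object as CSV."""
    writer = csv.writer(out)
    writer.writerow(["title"])
    writer.writerows([title] for title in titles)


def scrape(urls, out, fetch=download):
    """Minimal crawling logic: visit each URL in turn and pool the results."""
    titles = []
    for url in urls:
        titles.extend(extract_titles(fetch(url)))
    store_csv(titles, out)
    return titles


# Offline demo: a dict stands in for the network so the sketch runs anywhere.
fake_pages = {
    "page-1": '<h3><a href="a.html" title="Book A">Book A</a></h3>',
    "page-2": '<h3><a href="b.html" title="Book B">B...</a></h3><a title="Nav">x</a>',
}
buffer = io.StringIO()
found = scrape(list(fake_pages), buffer, fetch=fake_pages.get)
print(buffer.getvalue())
```

To run it against a live site, call `scrape` with real URLs and an open file, leaving `fetch` at its default. Passing the fetch function as a parameter also makes it easy to plug in a different HTTP client later.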

We compiled a list of the best Python HTTP clients for you to try.

Data Parsing Challenges

On a small scale, data parsing can be relatively straightforward. But as with all things related to web scraping, it can quickly spiral out of control. Here are some data parsing challenges you can expect:

- Changing page structure. Large websites, especially e-commerce sites, tend to change their HTML often. Once that happens, your parser will break, and you’ll have to adjust it.

- Inconsistent formatting. The data point you want to extract can have different formatting on different pages. You might have to build custom parsing logic to identify and unify it.

- JavaScript-generated HTML. Such pages often lack the usual descriptive attributes, such as meaningful class names, making it harder to navigate and extract the right data.
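To illustrate the inconsistent formatting challenge, here is one possible way to unify price strings that arrive in different shapes. The helper name `normalize_price` and its heuristic are assumptions: a `.` or `,` followed by exactly two digits at the end is treated as the decimal separator. That will not hold for every locale or currency.

```python
import re
from decimal import Decimal


def normalize_price(raw):
    """Return a Decimal for strings like '$1,299.00' or '1 299,50 EUR'.

    Assumption: a '.' or ',' followed by exactly two trailing digits is the
    decimal separator; every other separator is a thousands grouping.
    Returns None when the string contains no digits at all.
    """
    if not re.search(r"\d", raw):
        return None
    # Keep only digits and separators, dropping currency symbols and spaces.
    digits = re.sub(r"[^\d.,]", "", raw)
    match = re.search(r"[.,](\d{2})$", digits)
    if match:
        whole = re.sub(r"[.,]", "", digits[:match.start()])
        return Decimal(f"{whole or '0'}.{match.group(1)}")
    return Decimal(re.sub(r"[.,]", "", digits))


for sample in ["$1,299.00", "1299 USD", "1 299,50 EUR", "1.299,50", "Free"]:
    print(repr(sample), "->", normalize_price(sample))
```

Returning `None` for unparseable values, rather than raising, lets the caller decide whether to skip the row or flag it for review.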

Frequently Asked Questions About Data Parsing

To parse data means to analyze and then structure it according to specific rules. In the context of web scraping, parsing means extracting information from the HTML source and formatting it for further use.

Once you’ve parsed data, you can use it for further analysis.

Parsing itself doesn’t require proxies. However, your web scraping project will need them if it involves sending many requests to a website.