How to scrape a table using Beautiful Soup

Important: we’ll use a real-life example in this tutorial, so you’ll need the requests and Beautiful Soup (the beautifulsoup4 package) libraries installed.

Step 1. Let’s start by importing the BeautifulSoup class from the bs4 package.

from bs4 import BeautifulSoup

Step 2. Then, import the requests library.

import requests

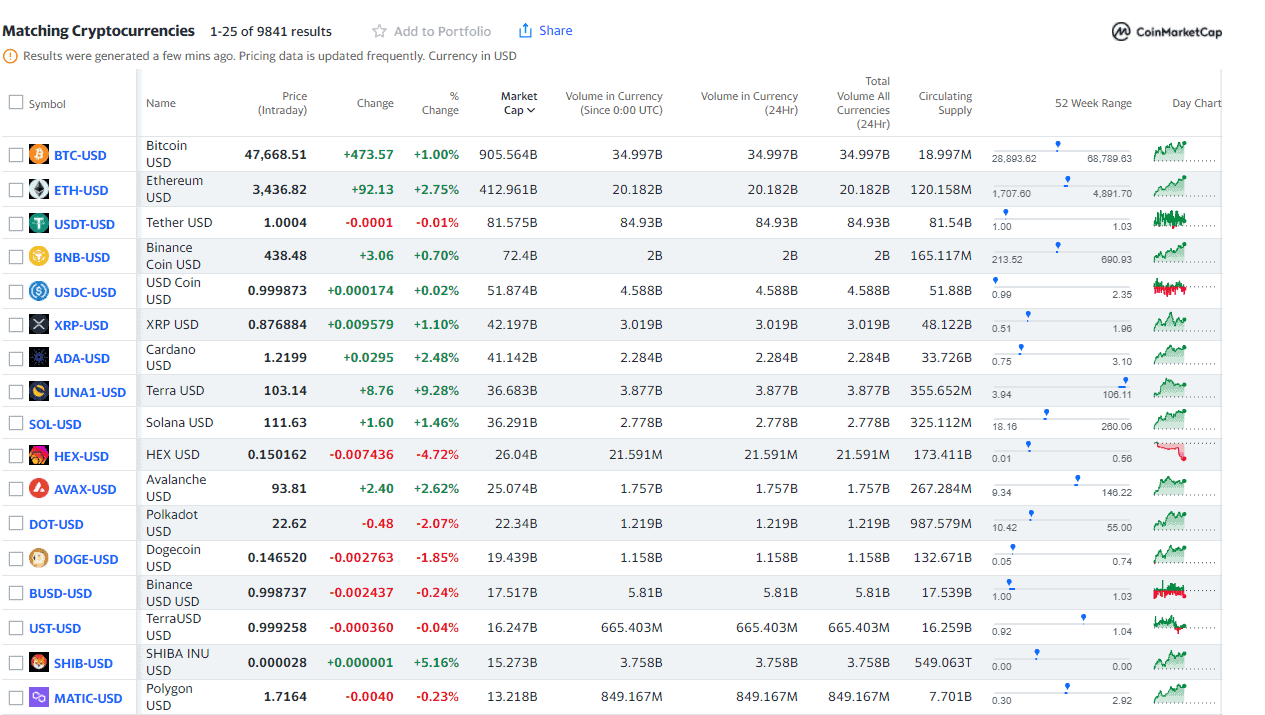

Step 3. Get the source code of your target page. We’ll be using Yahoo Finance in this example.

r = requests.get("https://finance.yahoo.com/cryptocurrencies/")

For any other page, the same call looks like this:

r = requests.get("Your URL")
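Before parsing, it can help to confirm that the request actually succeeded. The sketch below wraps the call in a small helper. The function name `fetch_html`, the 10-second timeout, and the User-Agent string are illustrative assumptions, not part of the original example.

```python
import requests

# Assumed values for illustration; adjust them for your own project.
DEFAULT_TIMEOUT = 10  # seconds
DEFAULT_HEADERS = {"User-Agent": "table-scraper-tutorial/0.1"}


def fetch_html(url, timeout=DEFAULT_TIMEOUT):
    """Return the raw response body for url, raising on HTTP errors."""
    response = requests.get(url, headers=DEFAULT_HEADERS, timeout=timeout)
    # raise_for_status() raises requests.HTTPError for 4xx/5xx responses,
    # so a blocked or missing page fails here rather than during parsing.
    response.raise_for_status()
    return response.content


# Usage (performs a network request, so it is left commented out):
# html = fetch_html("Your URL")
```

The returned bytes can be passed to BeautifulSoup in the same way as r.content.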

Step 4. Convert the HTML code into a BeautifulSoup object named soup.

soup = BeautifulSoup(r.content, "html.parser")

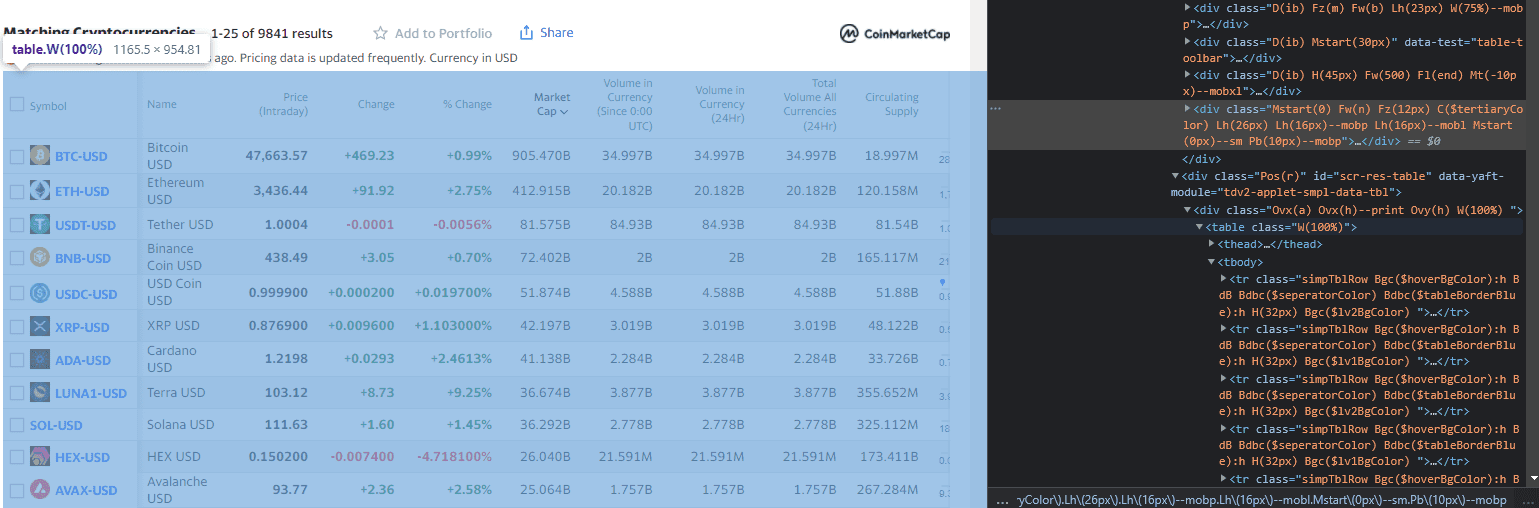

Step 5. Then, inspect the page source. You’ll see that the table has a class of W(100%).

NOTE: The class lets you single out the target table when there are several different tables on the same page.

Step 6. Parse the page content with BeautifulSoup, find the table in the HTML content and assign the whole table element to the table_element variable.

soup = BeautifulSoup(r.content, "html.parser")

table_element = soup.find("table", class_="W(100%)")
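Note that `soup.find()` returns `None` when nothing matches, which would make the later `find_all` call fail with an `AttributeError`. The sketch below uses a small hypothetical HTML snippet (not Yahoo's real markup) with two tables to show how the class picks out one of them and what a guard might look like.

```python
from bs4 import BeautifulSoup

# Hypothetical markup with two tables; only the second has the target class.
sample_html = """
<table class="summary"><tr><td>ignored</td></tr></table>
<table class="W(100%)"><tr><th>Symbol</th></tr><tr><td>BTC-USD</td></tr></table>
"""

soup = BeautifulSoup(sample_html, "html.parser")
table_element = soup.find("table", class_="W(100%)")

# find() returns None if no element matches, so check before going further.
if table_element is None:
    raise ValueError("No table with class W(100%) found; inspect the page source.")

print(len(table_element.find_all("tr")))
```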

NOTE: The goal is to scrape all the rows from the target table.

Step 7. Initialize a new list variable to save the data into.

output_list = []

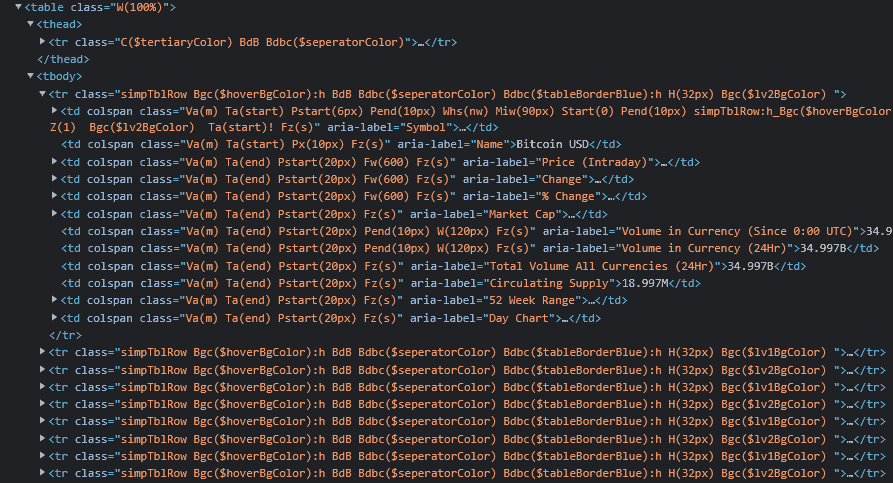

Step 8. Search for all tr tags in the table to get all the rows from the table_element that was saved earlier. The result includes the header row as well as every data row.

table_rows = table_element.find_all("tr")

NOTE: In this case, it’s also possible to get specific column values by referring to the aria-label attributes since they are present, but that won’t always be the case, so stick with a universal approach.
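For reference, the aria-label lookup mentioned in the note might look like the sketch below. The HTML and the label values `Symbol` and `Name` are made up for illustration, so check the real attribute values in the page source.

```python
from bs4 import BeautifulSoup

# Hypothetical row markup; real pages may use different aria-label values.
sample_html = """
<table>
  <tr>
    <td aria-label="Symbol">BTC-USD</td>
    <td aria-label="Name">Bitcoin USD</td>
  </tr>
</table>
"""

soup = BeautifulSoup(sample_html, "html.parser")

names = []
for row in soup.find_all("tr"):
    # attrs= is used because aria-label is not a valid keyword argument name.
    cell = row.find("td", attrs={"aria-label": "Name"})
    if cell is not None:
        names.append(cell.get_text(strip=True))

print(names)
```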

Step 9. The following for loop iterates through all the rows you got from the table and gets the children of each row. Each child is a table cell: a th element in the header row and a td element in the data rows. After getting the children, iterate through the row_children list and append the text value of each element to a row_data list to keep it simple.

for row in table_rows:
    row_children = row.children
    row_data = []
    for child in row_children:
        row_data.append(child.get_text())
    output_list.append(row_data)
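When the markup has whitespace or line breaks between cells, `row.children` also yields those text nodes, which show up as extra entries in `row_data`. One possible variation, shown here on a hypothetical snippet rather than the real page, asks only for `th` and `td` tags and strips surrounding whitespace:

```python
from bs4 import BeautifulSoup

# Hypothetical table with line breaks between cells, as in formatted HTML.
sample_html = """
<table>
  <tr>
    <th>Symbol</th>
    <th>Price</th>
  </tr>
  <tr>
    <td>AAA-USD</td>
    <td> 1.00 </td>
  </tr>
</table>
"""

soup = BeautifulSoup(sample_html, "html.parser")

output_list = []
for row in soup.find_all("tr"):
    # find_all with a list matches either tag name and skips text nodes.
    cells = row.find_all(["th", "td"])
    output_list.append([cell.get_text(strip=True) for cell in cells])

print(output_list)
```

Whether this is preferable depends on the page: if the cells are written without any whitespace between them, both approaches should produce the same rows.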

Step 10. Let’s display the results.

for row in output_list:
    print(row)

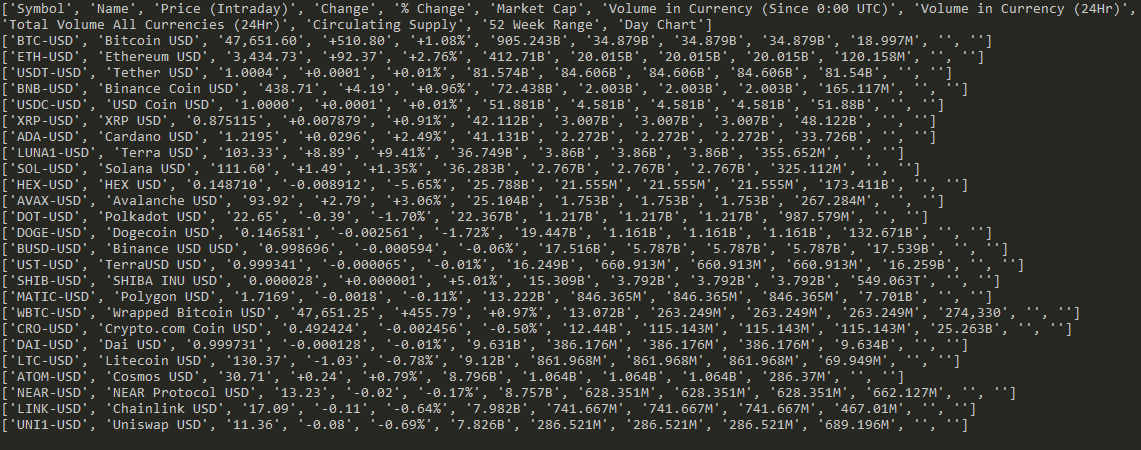

The result is a list of lists, each containing 12 elements that correspond to the table columns. The first list contains the table headers.

NOTE: This makes it easy to format the output as CSV or JSON and write the results to an output file. You can also convert it to a pandas DataFrame and use the data for analysis.
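As a rough illustration of that conversion, the sketch below uses only the standard library. The sample rows are hypothetical stand-ins for the scraped output_list, and an in-memory buffer is used in place of a real output file.

```python
import csv
import io
import json

# Hypothetical sample in the same shape as output_list: header row first.
output_list = [
    ["Symbol", "Name", "Price"],
    ["AAA-USD", "Coin A", "1.00"],
    ["BBB-USD", "Coin B", "2.50"],
]

# CSV: write every row as-is (the buffer stands in for an open file).
csv_buffer = io.StringIO()
csv.writer(csv_buffer).writerows(output_list)
csv_text = csv_buffer.getvalue()

# JSON: pair each data row with the header names to get one dict per row.
header, *data_rows = output_list
records = [dict(zip(header, row)) for row in data_rows]
json_text = json.dumps(records, indent=2)

print(csv_text)
print(json_text)
```

The same header and data_rows split should also work for pandas, for example `pandas.DataFrame(data_rows, columns=header)`, assuming pandas is installed.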

Results:

Congratulations, you’ve learned how to scrape a table using Beautiful Soup. Here’s the full script:

from bs4 import BeautifulSoup
import requests

r = requests.get("https://finance.yahoo.com/cryptocurrencies/")
soup = BeautifulSoup(r.content, "html.parser")
table_element = soup.find("table", class_="W(100%)")

output_list = []
table_rows = table_element.find_all("tr")

for row in table_rows:
    row_children = row.children
    row_data = []
    for child in row_children:
        row_data.append(child.get_text())
    output_list.append(row_data)

for row in output_list:
    print(row)